We Found Verifiable Algebraic Structure Inside LLMs

Do LLMs internally encode ontological relations in a formally verifiable algebraic structure?

This is the question at the heart of our latest research, and the answer has significant implications for the future of Model-Based Human-Machine Collaboration (MHMC).

Our Mission and the Challenge

At Mgnite Inc., our mission is to reduce ambiguity between humans and machines through models. As the systems that shape our world grow in complexity, the ability to represent knowledge with precision is not merely a technical goal — it is a prerequisite for meaningful collaboration, and ultimately, for human agency.

The rise of large language models (LLMs) has transformed how we interact with machines. Yet a critical question has remained unanswered: do LLMs reason formally, or do they merely approximate reasoning statistically? This distinction matters enormously for model-based systems engineering (MBSE), SysML v2, and any domain where logical consistency is not optional.

The Research

We are pleased to announce the release of our paper:

“Controlling Logical Collapse in LLMs via Algebraic Ontology Projection over 𝔽₂”

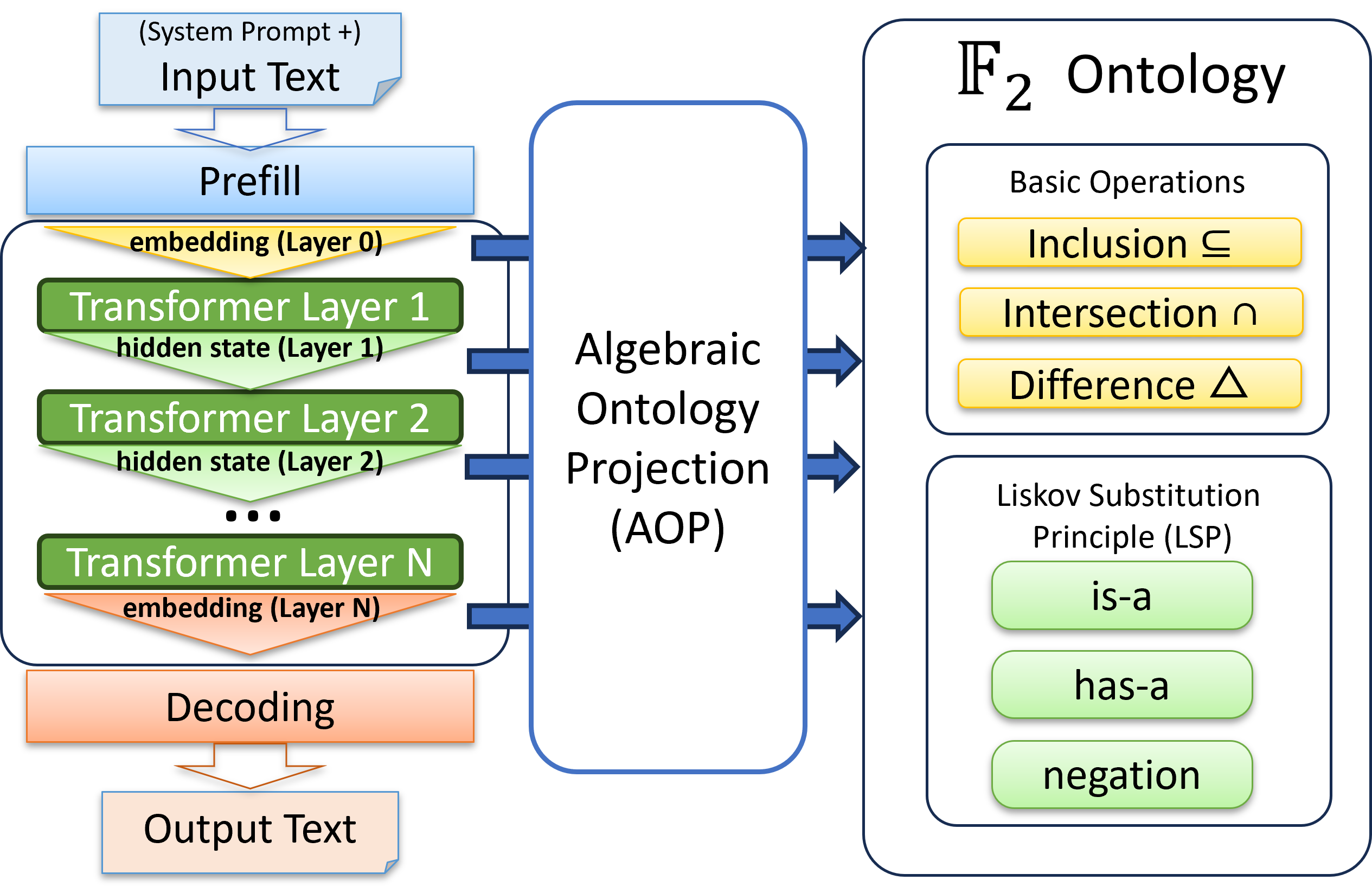

This work introduces Algebraic Ontology Projection (AOP), a method that projects LLM hidden states into the Galois field 𝔽₂ (the field with two elements, 0 and 1) under formal ontological constraints: the same is-a and has-a relations that underpin KerML, SysML v2, and formal specification languages. We see this as a key technology for directly verifying logical consistency in the model’s internal representations.
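To make the idea of checking an is-a relation over 𝔽₂ concrete, here is a minimal sketch. It is not the paper's implementation: the sign-threshold projection, the `weights` matrix, the toy three-dimensional "hidden states", and the rule that a child must carry every bit of its parent are all assumptions chosen for illustration.

```python
import numpy as np

def to_f2(hidden, weights):
    """Hypothetical projection: bit i is 1 when the i-th linear readout is positive."""
    return (weights @ hidden > 0).astype(np.uint8)

def is_a(child_bits, parent_bits):
    """Assumed encoding of 'child is-a parent': every bit set in the parent
    is also set in the child, i.e. parent * (1 + child) == 0 over F2."""
    violation = parent_bits * ((1 + child_bits) % 2)
    return not np.any(violation)

# Toy inputs, invented for this example (not real LLM hidden states).
weights = np.eye(3)
animal = np.array([1.0, -0.5, -0.2])
dog = np.array([0.8, 0.9, -0.3])
car = np.array([-0.4, -0.1, 0.7])

animal_bits = to_f2(animal, weights)  # [1, 0, 0]
dog_bits = to_f2(dog, weights)        # [1, 1, 0]
car_bits = to_f2(car, weights)        # [0, 0, 1]

dog_is_animal = is_a(dog_bits, animal_bits)   # True
car_is_animal = is_a(car_bits, animal_bits)   # False
animal_is_dog = is_a(animal_bits, dog_bits)   # False: the relation is directional
```

The point of the sketch is only that, once representations live in 𝔽₂, a relation becomes an exact equation that either holds or fails, rather than a similarity score that needs a threshold.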

The results were striking:

- 90.91% zero-shot accuracy on unseen ontological relations, with no fine-tuning of the model: AOP was trained on just 42 relational facts, none of which appear in the evaluation

- A new metric, Semantic Crystallisation (SC), that quantifies algebraic consistency layer by layer

- Empirical demonstration that system prompts function as algebraic boundary conditions — configuring the model’s internal logical structure, not merely its output behaviour

- Identification of Late-layer Collapse, a systematic degradation of logical consistency in final transformer layers
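
The layer-by-layer view behind the last three findings can be illustrated with a small stand-in score. This is not the paper's definition of Semantic Crystallisation: `layer_consistency`, the per-layer bit codes, and the relation list are hypothetical, and the numbers are made up purely to exercise the function rather than taken from any model.

```python
import numpy as np

def layer_consistency(codes, relations):
    """Fraction of (child, parent) is-a pairs satisfied by one layer's bit codes.
    A pair holds when every bit set in the parent is also set in the child."""
    held = sum(
        1 for child, parent in relations
        if not np.any(codes[parent] & (1 ^ codes[child]))
    )
    return held / len(relations)

def bits(*values):
    return np.array(values, dtype=np.uint8)

# Hypothetical is-a facts to check at every layer.
relations = [("dog", "animal"), ("cat", "animal")]

# Invented bit codes for three layers (early, middle, last).
layers = [
    {"animal": bits(1, 0, 0), "dog": bits(1, 1, 0), "cat": bits(0, 0, 1)},
    {"animal": bits(1, 0, 0), "dog": bits(1, 1, 0), "cat": bits(1, 0, 1)},
    {"animal": bits(1, 1, 0), "dog": bits(1, 1, 0), "cat": bits(1, 0, 1)},
]

scores = [layer_consistency(codes, relations) for codes in layers]
# With these toy codes: [0.5, 1.0, 0.5]
```

A profile like this, tracked across all layers of a real model, is the kind of curve on which a drop in the final layers would show up.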

The most important finding is perhaps the simplest: we did not teach the model this structure. We found it already there.

Why This Matters for MBSE and SysML v2

In recent years, the MBSE community has faced a fundamental tension: LLMs are powerful, but their logical reliability is uncertain. Apple’s recent study on reasoning reliability brought this concern into sharp focus: LLMs can exhibit logical collapse under pressure.

AOP provides a mathematically grounded tool to detect, measure, and control exactly this failure mode — particularly during model validation and reasoning tasks. The algebraic structure we extract corresponds directly to the ontological primitives of KerML and SysML v2. This is a first step toward LLMs that are not merely empirically useful in MBSE workflows, but formally grounded in the same structures that make those workflows rigorous.

This is not a claim that the problem is solved. It is a claim that the foundation now exists.

A Milestone Toward MHMC

Our mission is to ensure that humans — engineers and non-engineers alike — can understand and collaborate with machines without ambiguity. Machines that reason without formal grounding are machines that cannot be fully understood. Machines that cannot be fully understood cannot be truly collaborated with — particularly in safety-critical and engineering domains.

AOP is a step toward bridging that gap: between the expressive power of LLMs and the formal precision that MHMC demands.

The paper is available via this LINK.

We welcome enquiries from researchers, partners, and practitioners working at the intersection of MBSE, formal methods, and AI.