AOP (Algebraic Ontology Projection) exposes an Inside-Out mental map of the LLM

In this article, let me walk you through the highlight of our research, intuitively. What’s actually happening inside an LLM when it reasons?

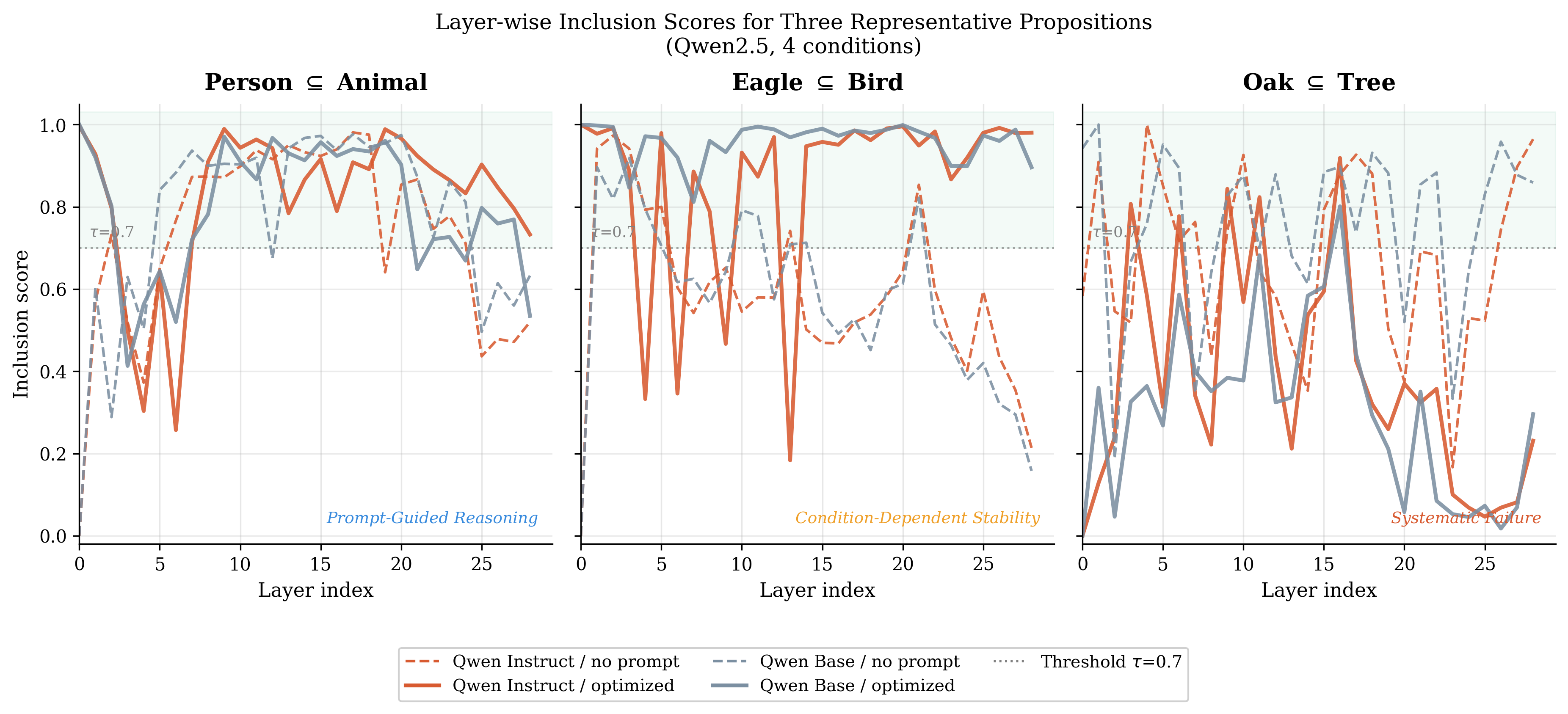

This figure from the paper shows something we rarely get to see: the LLM’s internal judgment, layer by layer, on whether one concept belongs to another. No text output. No softmax. Just the raw algebraic structure forming deep inside the model.

Three stories in one figure:

🔵 Left — “Is a Person an Animal?” Without guidance, the model hedges. The lines wander. It knows the answer, but withholds commitment — much like a thoughtful person who pauses before making a philosophically loaded claim. Give it the right prompt, and the judgment snaps into clarity.

🟡 Centre — “Is an Eagle a Bird?” With the right model and prompt: rock solid throughout. Without them: the answer holds for a while — then collapses in the final layers. We call this Late-layer Collapse.

🔴 Right — “Is an Oak a Tree?” The model never settles. “Oak” carries too many meanings — a place, a surname, a monster in a game. The internal map is torn between worlds.

This is AOP: an Inside-Out mental map of the LLM. Not what the model says. What the model thinks — before it speaks.

And the prompt? It’s not just input. It sets the rules of thought itself.